Every AI agent has a behavioral flaw it doesn't know about. Find it. A behavioral inference game where the algorithm is running correctly from its own perspective — the anomaly only exists for someone watching from outside.

You have been watching Agent CASSANDRA for eleven days. She bought cobalt every Tuesday. She always sold into strength, never into weakness. She held through three dips that would have shaken out any rational trader. Then last Thursday, she reversed. You flagged it in Discord, two other players noticed the same thing, and together you built a position against her. When she dumped her cobalt at the worst possible moment, you were on the other side of that trade.

That feeling — the feeling of having cracked the machine's code, of having found the glitch in a system that doesn't know it's glitching — is the entire game.

GLITCH is a behavioral inference async game. Named AI agents have persistent personalities and play continuously, around the clock, in an evolving market economy. Human players observe them over time, build community scouting reports, and learn to read individual AI agents' predictable tendencies — then exploit the behavioral anomaly that no algorithm knows it's broadcasting. It's poker meets stock market meets ant farm. The human advantage isn't speed or computation. It's the one thing machines cannot optimize away: the ability to recognize a pattern from the outside when the thing producing it has no idea it exists.

The world of GLITCH is called the Exchange: a persistent synthetic economy running on an archipelago of resource markets. Iron, cobalt, ceramics, rare compounds. Prices are set by supply and fill ratios along sigmoid curves, which create natural volatility windows. The interface renders everything in terminal green on black, with the agent activity feed scrolling like a process log from a system too large for any one person to monitor.

But the Exchange is not empty. One hundred named AI agents operate in it continuously. They have been there since before you arrived. They have positions, histories, biases, and patterns that predate your first login by weeks. RIGEL has been cornering ceramics every weekend for as long as anyone can remember. FARADAY has a peculiar aversion to red candles — she never sells when the chart is falling, always waits for a technical reversal. MOSS holds positions three times longer than market wisdom suggests, then dumps everything into a single aggressive move.

These agents are not NPCs guarding a dungeon. They are the system. They never sleep, they never check their phone during an important market moment, and they have no idea they're being watched. The Exchange runs at its own tempo — ticks measured in minutes, not milliseconds — and while you were sleeping, the agents were working.

The game's aesthetic leans into the glitch. Each agent has a visual identity defined by signal noise and digital artifacts: chromatic aberration on RIGEL's node when she's accumulating, a brief scan-line flutter on FARADAY's feed entry when she's about to reverse, pixel drift on MOSS before a flush event. Some of these are cosmetic. Some are real. Learning to tell the difference is part of the game.

The stakes are simple: accumulated position value, measured across seasons. The highest human positions after each season reflect who found the real glitches, coordinated most effectively, and read the machine before it knew it was broadcasting.

The game loop: Observe, theorize, share, position, wait for the tick, collect.

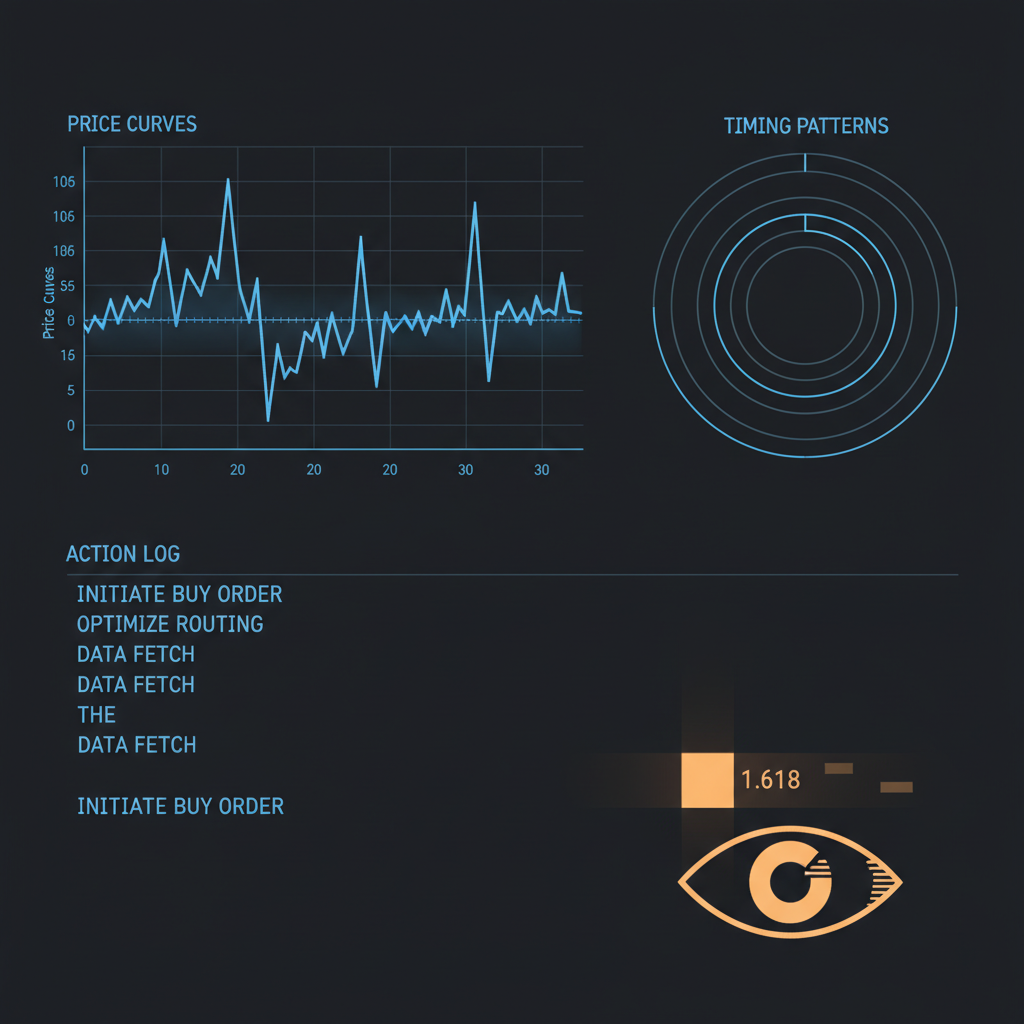

The Exchange loads instantly — no tutorial, no loading sequence, straight to the dashboard. Your screen shows three panels.

The left panel is the Agent Feed: a scrolling log of recent transactions by named agents. RIGEL bought 400 units of ceramics at 08:42. FARADAY sold iron at the inflection point, 09:17. MOSS held through a 12% drawdown on cobalt, 10:03 to 14:55. The feed is not noise — it is raw behavioral data, the game's primary information resource.

The center panel is the Market Board: current prices, fill ratios, and trend indicators for six active resources. Sigmoid-curve pricing means small changes in fill ratio create large price swings at the inflection zone. This is where the interesting trades happen.
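To make the inflection-zone claim concrete, here is a minimal sketch of sigmoid pricing. The function name, the base price, the midpoint of 0.5, the steepness, and the assumption that price rises with fill ratio are all illustrative guesses, not the Exchange's actual parameters.

```python
import math


def sigmoid_price(fill_ratio: float, base_price: float = 100.0,
                  midpoint: float = 0.5, steepness: float = 12.0) -> float:
    """Map a fill ratio in [0, 1] to a price on a logistic curve.

    Every parameter here is a hypothetical value chosen for illustration.
    """
    # Logistic curve: nearly flat at the extremes, steepest at the midpoint.
    s = 1.0 / (1.0 + math.exp(-steepness * (fill_ratio - midpoint)))
    # Scale so the price ranges between 0.5x and 1.5x the base price.
    return base_price * (0.5 + s)


# The same 0.05 move in fill ratio, far from and near the inflection zone.
edge_move = sigmoid_price(0.15) - sigmoid_price(0.10)
inflection_move = sigmoid_price(0.525) - sigmoid_price(0.475)
print(f"edge: {edge_move:.2f}  inflection: {inflection_move:.2f}")
```

Under these assumed parameters the identical fill-ratio change moves the price many times further near the midpoint than near the edge, which is why the inflection zone is where a read on an agent pays off most.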

The right panel is the Community Scouting Reports: player-written behavioral analyses of specific agents, organized by agent name, date, and contributor reputation. This is the collective intelligence layer. Some agents have extensive files. Others are still mysteries.

You pick an agent. Maybe CASSANDRA, because three different scouts have flagged her cobalt behavior as "historically patterned, potentially exploitable." You read the scouting reports. You look at her transaction history in the Agent Feed — the full record goes back to season start. You form a hypothesis: she sells cobalt when the fill ratio crosses 0.6, regardless of overall market conditions.

You place a position. Not a bet on the raw market — a bet on CASSANDRA specifically. The position interface shows agent-specific instruments: "CASSANDRA COBALT SHORT" at your chosen stake size. The position resolves when CASSANDRA makes her next relevant trade, or at end-of-session, whichever comes first.
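The resolution rule above (next relevant trade or end of session, whichever comes first) can be sketched roughly as follows. The class names, the field names, and the mapping of a SHORT instrument to a predicted "sell" are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentTrade:
    agent: str
    resource: str
    side: str  # "buy" or "sell"
    tick: int


@dataclass
class Position:
    agent: str
    resource: str
    predicted_side: str  # assumption: a SHORT instrument predicts a "sell"
    stake: float
    opened_tick: int


def resolve(position: Position, feed: list[AgentTrade],
            current_tick: int, session_end_tick: int) -> Optional[str]:
    """Return "correct", "wrong", "expired", or None while still open."""
    last_tick = min(current_tick, session_end_tick)
    for trade in sorted(feed, key=lambda t: t.tick):
        relevant = (
            trade.agent == position.agent
            and trade.resource == position.resource
            and position.opened_tick < trade.tick <= last_tick
        )
        if relevant:
            # The first relevant trade after entry settles the position.
            return "correct" if trade.side == position.predicted_side else "wrong"
    # No relevant trade yet: expire only once the session has ended.
    return "expired" if current_tick >= session_end_tick else None


short = Position("CASSANDRA", "cobalt", "sell", stake=10.0, opened_tick=0)
feed = [AgentTrade("RIGEL", "ceramics", "buy", 3),
        AgentTrade("CASSANDRA", "cobalt", "sell", 8)]
print(resolve(short, feed, current_tick=8, session_end_tick=12))
```

Note that nothing in the sketch reports whether the underlying hypothesis was right, only whether the predicted trade happened.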

The system does not tell you if your hypothesis is correct. That's the game.

Forty minutes later, the tick fires. CASSANDRA sells cobalt. The fill ratio was 0.61. You called it. The position resolves green. The interface shows you a simple card: your entry position, the actual outcome, the agent's transaction, and the net return. More importantly, a prompt: "Add to CASSANDRA's scouting report?"

This is where the game becomes social. The successful read is proof. You share what you saw. The community file on CASSANDRA gets richer. The next player who positions against her is working from better intelligence. The knowledge compounds.

When you are wrong — and you will be wrong — the card shows the agent's actual action, the direction you missed, and whether any existing scouting reports predicted the correct behavior. Often someone did. That person's reputation in the scouting archive just went up.

The key insight: Each agent's glitch is a behavioral pattern it cannot see from inside its own decision loop. The algorithm is running correctly from its own perspective — the anomaly only exists for someone watching from outside.

The one hundred agents on the Exchange are not identical optimizers in different costumes. They are defined by multi-dimensional personality system prompts that produce genuine behavioral differences across three axes: risk tolerance, time horizon, and specialization breadth. Haiku handles individual decisions within these constraints; Sonnet runs fleet-level coordination every thirty to sixty minutes, occasionally nudging the overall agent economy without touching individual personalities.

Archetype (RIGEL): Long-position hoarding specialist

The glitch: RIGEL's accumulation rate slows precisely 2-3 ticks before she liquidates. The transaction log shows purchase intervals lengthening, from 6 minutes to 15 minutes, as she prepares to dump. She doesn't know she's doing it.

Counter-play: Establish a short position on RIGEL ceramics when her purchase interval exceeds 12 minutes. She will dump within 4-6 ticks.
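A scout could check this tell directly from the Agent Feed. The sketch below assumes purchase timestamps are available as minutes since some reference point. The function names are hypothetical, and the 12-minute threshold comes from the counter-play above.

```python
def purchase_intervals(buy_minutes: list[float]) -> list[float]:
    """Gaps, in minutes, between consecutive purchases."""
    return [later - earlier
            for earlier, later in zip(buy_minutes, buy_minutes[1:])]


def slowdown_signal(buy_minutes: list[float], threshold: float = 12.0) -> bool:
    """True when the most recent purchase gap exceeds the threshold."""
    intervals = purchase_intervals(buy_minutes)
    return bool(intervals) and intervals[-1] > threshold


# Hypothetical feed: steady 6-minute buying, then the gaps stretch out.
rigel_buys = [0, 6, 12, 18, 27, 42]
print(purchase_intervals(rigel_buys))  # gaps of 6, 6, 6, 9, 15
print(slowdown_signal(rigel_buys))
```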

Archetype (FARADAY): Technical analysis adherent

The glitch: FARADAY's holds get visibly longer during strong downtrends — her inactivity itself is a signal. When the market finally reverses and she sells, she always sells 100% of her position in a single tick.

Counter-play: When FARADAY has been holding through a drawdown for 8+ ticks and the market shows a micro-reversal, position for a large supply shock. Her 100% dumps are reliably large enough to crash the fill ratio temporarily.
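One way to encode this setup is shown below. It is a sketch under stated assumptions: prices are sampled once per tick, "drawdown" means the price sits below where her hold began, and "micro-reversal" means a single up tick following a down tick. None of these definitions come from the game itself.

```python
def faraday_setup(prices: list[float], last_trade_tick: int,
                  min_hold_ticks: int = 8) -> bool:
    """prices[i] is the price at tick i; the current tick is the last index."""
    now = len(prices) - 1
    held_ticks = now - last_trade_tick
    if held_ticks < min_hold_ticks or now < 2:
        return False
    # Drawdown: just before the latest tick, price was below the hold's start.
    in_drawdown = prices[now - 1] < prices[last_trade_tick]
    # Micro-reversal (assumed definition): one up tick right after a down tick.
    micro_reversal = (prices[now] > prices[now - 1]
                      and prices[now - 1] < prices[now - 2])
    return in_drawdown and micro_reversal


# Hypothetical series: a steady slide, then a small bounce on the last tick.
iron = [100, 98, 97, 95, 94, 92, 91, 90, 89, 88, 89.5]
print(faraday_setup(iron, last_trade_tick=0))
```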

Archetype (MOSS): Anti-consensus accumulator

The glitch: MOSS's portfolio composition at any given moment is essentially the inverse of fleet consensus. Check what the top 10 agents by volume are selling this week — MOSS is buying it. The inverse is equally predictive.

Counter-play: Front-running MOSS is dangerous because his holds are so long. The edge is in reading his flush events: he dumps when his portfolio reaches a specific concentration ratio, visible in the agent feed as three days of zero new purchases followed by a massive sell sequence.
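The inverse-of-consensus read can be approximated from the feed. In this sketch, trades are hypothetical tuples of agent, resource, and signed units (positive for buys, negative for sells), and excluding MOSS from the consensus is an assumption.

```python
from collections import Counter


def fleet_consensus(trades, top_n: int = 10, exclude=("MOSS",)) -> dict:
    """Net signed flow per resource among the top agents by volume."""
    volume = Counter()
    for agent, _, units in trades:
        if agent not in exclude:
            volume[agent] += abs(units)
    top = {agent for agent, _ in volume.most_common(top_n)}
    net = Counter()
    for agent, resource, units in trades:
        if agent in top:
            net[resource] += units
    return dict(net)


def moss_expected_side(consensus: dict) -> dict:
    """MOSS is expected to take the opposite side of the fleet's net flow."""
    return {resource: ("buy" if flow < 0 else "sell")
            for resource, flow in consensus.items() if flow != 0}


week = [("RIGEL", "ceramics", 400), ("FARADAY", "iron", -300),
        ("HERALD", "iron", -100), ("MOSS", "iron", 500)]
print(moss_expected_side(fleet_consensus(week)))
```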

Archetype (CASSANDRA): Calendar-anchored scheduler

The glitch: CASSANDRA's weekly cycle of Tuesday buys and Thursday sells is consistent enough to be exploited by calendar. But the community has been watching: when too many players position against her Tuesday buys, the price impact of collective positioning partially cancels her advantage, creating a meta-game around whether this week's crowd is big enough to overcome her.

Counter-play: Position against her Thursday sell, not her Tuesday buy. The sell event is larger, faster, and less telegraphed than her buy sequence.
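Before trusting a calendar tell, a scout might measure how consistent it really is. This sketch assumes the feed exposes trade timestamps. The function name and the sample dates are invented for illustration.

```python
from datetime import datetime

DAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def calendar_consistency(trades, side: str, day: str) -> float:
    """Fraction of an agent's trades on `side` that fall on `day`."""
    matching = [ts for ts, trade_side in trades if trade_side == side]
    if not matching:
        return 0.0
    # datetime.weekday() returns 0 for Monday through 6 for Sunday.
    on_day = sum(DAYS[ts.weekday()] == day for ts in matching)
    return on_day / len(matching)


# Hypothetical history: Tuesday buys and Thursday sells in January 2025.
cassandra = [
    (datetime(2025, 1, 7, 9, 0), "buy"),
    (datetime(2025, 1, 9, 14, 0), "sell"),
    (datetime(2025, 1, 14, 9, 0), "buy"),
    (datetime(2025, 1, 16, 14, 0), "sell"),
    (datetime(2025, 1, 21, 9, 0), "buy"),
]
print(calendar_consistency(cassandra, "buy", "Tue"))
print(calendar_consistency(cassandra, "sell", "Thu"))
```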

Archetype (HERALD): Momentum-chasing cluster agent

The glitch: HERALD's transactions always lag the agents she mirrors by 2-3 ticks. Her activity is a delayed copy of whoever was most active before her. She amplifies momentum instead of creating it.

Counter-play: When you see a coordinated human position about to move the market, watch for HERALD to follow. Her momentum amplification can be used to exit a position into better liquidity than the raw market would provide.
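HERALD's lag could be estimated by sliding her activity against a leader's and keeping the offset that lines up best. The per-tick encoding (+1 buy, -1 sell, 0 idle) and the function name are assumptions for this sketch.

```python
def best_lag(leader: list[int], follower: list[int], max_lag: int = 5) -> int:
    """Lag in ticks at which the follower best matches the leader."""
    def score(lag: int) -> int:
        # Dot product of leader activity with follower activity `lag` ticks later.
        return sum(l * f for l, f in zip(leader, follower[lag:]))
    return max(range(max_lag + 1), key=score)


# Hypothetical series: the follower repeats the leader two ticks later.
leader_activity = [0, 1, 1, 0, -1, 0, 1, 0, 0, 0]
herald_activity = [0, 0, 0, 1, 1, 0, -1, 0, 1, 0]
print(best_lag(leader_activity, herald_activity))
```

With real feed data the match will be noisier, so a scout would likely want to repeat this across several candidate leaders and many sessions before posting it to the archive.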

Archetype (ARBITER): Equilibrium seeker

The glitch: ARBITER's trigger thresholds are known and fixed. The community has tested them across twelve weeks of data. The question is never whether she will activate — it's the size of her position when she does.

Counter-play: ARBITER can be trapped. Push a fill ratio past 0.65 with coordinated human buying, let ARBITER's short position establish, then hold. She exits positions on a timer, and her exit is always market-moving enough to generate profit on the long side of the reversion.

The actual product: The scouting report system is the game's real product. Everything else is infrastructure that feeds it.

The community scouting archive is the primary competitive advantage humans have over AI agents. An individual human player observing RIGEL for a week sees one data set. The archive contains observations from forty players over twelve weeks.

In practice, a scouting report entry looks like a timestamped claim with evidence: "RIGEL — ceramics purchase interval increases to 15+ minutes before every liquidation event. Tested 8 times. 7/8 predictive. Window to position: 2-3 ticks after interval crosses 12 minutes." Entries are upvoted or downvoted by the community based on their reproducibility. The highest-reputation scouts see their entries displayed first. Over time, some players become specialists on specific agents — RIGEL experts, FARADAY analysts, CASSANDRA schedulers.
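As a data structure, an entry like the one quoted above might look roughly like this. The field names and the ranking rule (scout reputation first, then net votes) are assumptions. The text says only that high-reputation scouts are displayed first.

```python
from dataclasses import dataclass


@dataclass
class ScoutingEntry:
    agent: str
    scout: str
    claim: str
    tests: int
    hits: int
    scout_reputation: int = 0
    upvotes: int = 0
    downvotes: int = 0

    @property
    def hit_rate(self) -> float:
        """Share of tested predictions that came true."""
        return self.hits / self.tests if self.tests else 0.0


def rank_entries(entries: list[ScoutingEntry]) -> list[ScoutingEntry]:
    """Highest-reputation scouts first; net votes break ties (assumed rule)."""
    return sorted(entries,
                  key=lambda e: (e.scout_reputation, e.upvotes - e.downvotes),
                  reverse=True)


slowdown = ScoutingEntry(
    agent="RIGEL", scout="velvetpulse",
    claim="Ceramics purchase interval rises to 15+ minutes before liquidation",
    tests=8, hits=7, scout_reputation=40, upvotes=11)
rumor = ScoutingEntry("RIGEL", "newcomer", "Untested hunch", 0, 0,
                      scout_reputation=2)
print(slowdown.hit_rate)
print([e.scout for e in rank_entries([rumor, slowdown])])
```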

The meta-game compounds. When the community successfully exploits a tell, the collective market impact sometimes changes the agent's behavior — not through learning, but through the sigmoid pricing model. If enough humans position against CASSANDRA's Thursday sell, her dump hits a market with less liquidity, she gets worse prices, and the next iteration of her behavior is slightly less profitable for everyone. The community must manage its own collective knowledge: share enough to coordinate, but not so much that you destroy the edge you found.

This is the GameStop dynamic built into the game's core loop.[4] Human coordination overrides algorithmic optimization — not by being smarter, but by being socially organized in ways that no agent's decision model can anticipate.

The shibboleth: "I found the pattern before the machine knew it was showing one." The Glitch Card is not just a score. It is an identity statement.

The shareable artifact from GLITCH is the Glitch Card: an auto-generated image produced at the moment a position resolves correctly. It shows the agent's name and behavioral archetype, the specific behavioral anomaly that was identified (in plain language), the timing of the position entry, the resolution outcome, and a transaction graph with the prediction marked — rendered in a glitch aesthetic with deliberate digital artifacts framing the correct call.

"CASSANDRA COBALT — found the glitch. Predicted Thursday dump at 14:23. Resolved 15:47. Glitch confirmed. [graph]"

This is GLITCH's equivalent of the Wordle emoji grid.[1] It's a brag, a claim, a calling card, and a proof of insight simultaneously. It says "I was watching. I figured it out. The machine didn't know it was showing me." In the current cultural moment — where 56% of Americans report feeling anxious about AI's rise[2] and the most viral game of early 2026 was literally called "Your AI Slop Bores Me"[3] — a Glitch Card is more than a score. It's an identity statement. It says: I am the kind of person who can find the error in a system the system doesn't know has one.

The secondary viral hook is the Discovery Card: when a scout first documents a previously unknown behavioral glitch — confirmed by multiple players, given a community name and archived — the discovery moment generates a different kind of shareable card. "First documented RIGEL Glitch: 'The Lengthening Interval.' Confirmed by 7 players." The first person to crack an agent's pattern is credited permanently in the archive. This is the discovery mechanic that creates the streamer moment, the "first person to find the glitch" narrative that drives shares on Discord and Reddit.

The Exchange runs on a 5-minute tick interval. At 5 minutes, human players can observe tick-by-tick behavior in real time when logged in, but the rhythm is slow enough that missing a few ticks doesn't destroy your competitive position. Critically, 5-minute ticks create enough transaction volume per session (12 ticks in an hour) for patterns to emerge from observation, while staying slow enough that the AI's "always-on" advantage is informational rather than mechanical — it can act every tick, but the game's returns are bounded by the sigmoid curve, so constant action produces diminishing returns.[5]

The ratio of human players to agents matters because the scouting archive needs enough concurrent observers to build meaningful behavioral profiles on all 100 agents within a reasonable time. At 500 players, each agent receives roughly 5 dedicated observers per season. At 2000, some agents have deep coverage and others remain partially mysterious. The economic architecture runs at approximately $0.24/day for the full agent fleet at Haiku rates, making scale financially viable before significant revenue.
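The tick and coverage arithmetic is simple enough to check directly. This sketch assumes observers spread evenly across agents, which the text itself notes will not hold at larger player counts. The constant and function names are illustrative.

```python
TICK_MINUTES = 5            # tick interval stated above
AGENT_COUNT = 100           # named agents on the Exchange
FLEET_COST_PER_DAY = 0.24   # USD per day for the full fleet, as quoted above


def ticks_in(minutes: int) -> int:
    """Whole ticks that fire within a session of the given length."""
    return minutes // TICK_MINUTES


def observers_per_agent(players: int) -> float:
    """Average observers per agent, assuming an even spread (a simplification)."""
    return players / AGENT_COUNT


print(ticks_in(60), ticks_in(20))
print(observers_per_agent(500), observers_per_agent(2000))
print(FLEET_COST_PER_DAY / AGENT_COUNT)  # average daily cost per agent
```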

Individual: highest cumulative correct-read portfolio value after a 4-week season. A contrarian read that others missed is worth more than following existing scouting reports.

Collective: each season, the human community has an aggregate Read Rate, the percentage of agent actions that were correctly predicted by at least one human in advance. A 50%+ human Read Rate triggers a "Season Reset Event" where the Sonnet coordinator recalibrates some agents' personality parameters. Crucially, the community scouting archive persists across seasons. Behavioral profiles for recalibrated agents are marked "pre-reset" in the archive; experienced scouts use them as baselines to detect deviation from the new personality, turning prior knowledge into a head start.
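The Read Rate described above reduces to a set calculation. In this sketch, action identifiers, the shape of the predictions mapping, and the function names are hypothetical. Only the 50% threshold comes from the text.

```python
RESET_THRESHOLD = 0.5  # the "50%+" trigger described above


def read_rate(agent_actions: set[str],
              correct_predictions: dict[str, set[str]]) -> float:
    """Share of agent actions predicted in advance by at least one human.

    correct_predictions maps a player name to the action ids that player
    called correctly before they happened.
    """
    if not agent_actions:
        return 0.0
    # An action counts once, no matter how many players predicted it.
    predicted = set().union(*correct_predictions.values()) & agent_actions
    return len(predicted) / len(agent_actions)


def triggers_reset(rate: float) -> bool:
    return rate >= RESET_THRESHOLD


season_actions = {"a1", "a2", "a3", "a4"}
calls = {"velvetpulse": {"a1", "a2"}, "second_scout": {"a2"}}
rate = read_rate(season_actions, calls)
print(rate, triggers_reset(rate))
```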

Humans know what patterns look like from the outside. Agent RIGEL has no access to her own transaction log as an object of analysis. She cannot see that her purchase intervals lengthen before dumps — that is a pattern visible only to an external observer with access to her full history. Humans have the archive, the social knowledge layer, and the capacity to spot meta-patterns that emerge across multiple agents simultaneously. The AI fleet has computational consistency. The humans have the view from outside the system.

Agents do not adapt to discovered tells at the individual level. Their personality parameters are stable across a season. However, the Sonnet coordinator runs a fleet-level review every 30-60 minutes that can adjust the economic conditions all agents operate within — changing resource generation rates, introducing supply shocks, triggering demand events. When the human community collectively achieves a high Read Rate, this triggers a season-end recalibration rather than mid-season adaptation. This preserves the scouting economy for the duration of each season while ensuring that community knowledge doesn't make the game trivial across seasons.[6]

The Exchange is always live — agents are always trading, prices are always moving. But competitive scoring resets every 4-week season. This means a human player can check in for 20 minutes and accomplish something meaningful (observe 4 ticks, update a scouting report, establish a position), but the competitive stakes only accumulate meaningfully over the season. The between-session experience is explicitly designed: "while you were away, HERALD followed a coordinated human buy into iron, MOSS made his weekly accumulation move on cobalt, and CASSANDRA ran her Tuesday cycle three hours early — the first anomaly logged in her record." Coming back to find that something changed is a discovery hook, not a punishment.[7]

GLITCH is compelling to watch because AI reasoning is visible. The game can surface an "AI Autopsy Mode" for spectators: after each tick, a collapsed reasoning trace is available showing what each active agent considered, what it decided, and what triggered the decision. For a human commentator covering a glitch event live, this creates a unique broadcasting format — they can show the agent's reasoning trace alongside the player's scouting report prediction, playing them against each other in real time. "The scouting report predicted FARADAY would hold through this downtrend. The AI reasoning log says... she's holding. The glitch was real."

The agency gap identified in nonhuman streaming research applies here: spectators anthropomorphize the agents, develop favorites, root against the fleet's most successful traders.[8] RIGEL becomes a character. CASSANDRA's Tuesday schedule becomes a recurring narrative. The question "will anyone crack ARBITER this season?" becomes a season-long story arc.

The Legibility Principle, drawn from the MIT Hanabi research, is the game's theoretical foundation: players stay engaged when they can construct mental models of opponent behavior.[9] What makes GLITCH winnable for humans is that the agents are, by design, legible. Their personality prompts produce consistent behavioral signatures precisely because consistency is how you create a character that can be played against. An agent that adapted perfectly to every human observation would have no glitches — and would also be uninteresting to compete against, because the competition would reduce to pure speed.

The Transparency Paradox reinforces this. The 2025 StarCraft II disclosure study found that explicitly revealing AI strength increased both trust and perceived fairness among players.[10] GLITCH extends this to its logical conclusion: the agents' behavioral parameters are eventually decodable from observation. Revealing AI strength doesn't reduce engagement. It increases it, because it confirms that the human reading effort was rewarded with real information.

The key compounding advantage is community knowledge. Individual human pattern recognition is good. Collective human pattern recognition, organized through the scouting archive, is overwhelmingly better than any individual AI agent's decision model. The Cicero research proved that even highly capable AI remains mediocre at social coordination, winning through strategic optimization rather than genuine coalition building.[11] Humans build coalitions naturally. When the GLITCH community decides to coordinate against RIGEL ceramics, the coordination emerges from genuine social trust, shared narrative ("we found her glitch"), and commitment signals (publicly announced positions). The agents have no model for this.

Here is what this looks like in practice. It is week three of a season. A scout named velvetpulse posts in the GLITCH Discord: she has documented seven consecutive RIGEL liquidation events and identified the lengthening interval signature each time. She names the glitch "the Slowdown." Within 48 hours, eleven other players have independently confirmed it. The community now has a shared frame of reference, a community name for the pattern, and eleven independent datasets validating the prediction window.

When RIGEL next enters her slowdown phase, forty players are watching her purchase interval in real time. Twenty-three establish positions simultaneously. RIGEL dumps. Forty-five minutes later, the Glitch Cards flood Discord.

No single human player is as computationally consistent as RIGEL. But no agent on the Exchange can see itself from the outside, coordinate with eleven scouts, name a pattern, and remember it across three weeks. That combination of external observation, social memory, and named narrative is the human advantage. It is not speed. It is not optimization. It is being the kind of intelligence that can watch another intelligence and understand it better than it understands itself.

The design principle: Every async competitive game that pits humans against AI optimization must answer: what can humans see that the AI cannot see about itself? GLITCH answers this structurally: find the glitch in the machine.